Quite frankly, I am not impressed by the GPU support. The DNN module supports Intel GPUs with the OpenCL backend. The point that it was much faster than the Halide version! So, we are better off using the reference C++ implementation. There was an effort to make the DNN module faster using Halide but one fine day Vadim Pisarevsky optimized the reference CPU implementation to Currently, the DNN module supports a few different backendsĪll results shown in this post used the reference C++ implementation. I learned from Dmitry that the DNN module was started as part of Google Summer of Code (GSOC) by an intern Vitaliy Lyudvichenko who worked on it over two summers. So here is some inside knowledge I acquired from Dmitry. He was kind enough to give me a quick overview of the DNN module. I had a call with Dmitry Kurtaev who is part of the core OpenCV team and has been working on the DNN module for about 2 years.

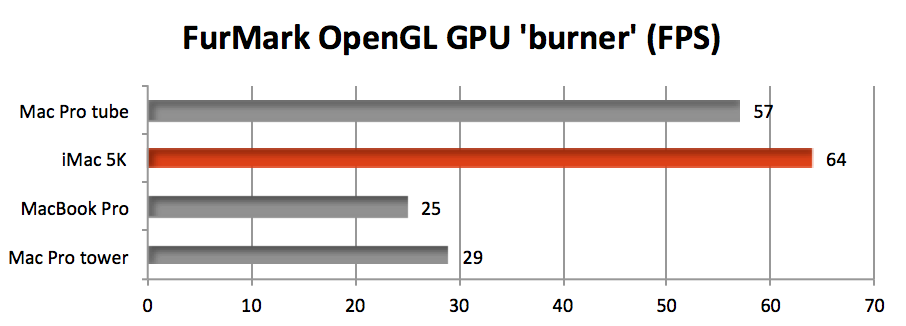

Therefore, Intel has a huge incentive to make OpenCV DNN run lightning fast on their CPUs.įinally, the huge speed up also comes from the fact that the core team has deep optimization expertise on Intel CPUs. Nobody uses Intel processors to train Deep Learning models, but a lot of people use their CPUs for inference. The core OpenCV team is therefore at Intel.Īs far as AI is concerned, Intel is in the inference business. For a while, an independent company called Itseez was maintaining OpenCV, but recently it was acquired by - no points for guessing - Intel. If you are new to OpenCV, you may not know OpenCV started at Intel Labs and the company has been funding its development for the most part. Now, which company is the top CPU seller in the world? Intel of course. This has been a huge win for NVIDIA which has benefitted from the AI wave in addition to the cryptocurrency wave. Consequently, the GPU implementation of all Deep Learning frameworks (Tensorflow, Torch, Caffe, Caffe2, Darknet etc.) is based on cuDNN. In 2007, they released CUDA to support general purpose computing and in 2014 they released cuDNN to support Deep Learning on their GPUs. The company was very smart to realize the importance of GPUs in general purpose computing and more recently in Deep Learning. Which company is the top GPU seller in the world? Yup, it is NVIDIA. In corporate America, whenever you see something unusual, you can find an answer to it by following the money. Why is OpenCV’s Deep Learning implementation on the CPU so fast? - The non-technical answer The reference implementation took 25.45 seconds while the OpenCV version took only 3.598 seconds. We compared the reference implementation of OpenPose in Caffe with the same model imported to OpenCV 3.4.3. We show how to import one of the best Pose Estimation libraries called OpenPose into an OpenCV application. If you have not read our post about Human Pose Estimation, you should check it out. 
In the problem of Pose Estimation, given a picture of one or more people, you find the stick figure that shows the position of their various body parts. Remember, these are both CPU implementations. The OpenCV version ran at an impressive 50 ms per frame and was 6x faster than the reference implementation. We compared the GOTURN Tracker in OpenCV with the Caffe based reference implementation provided by the authors of the GOTURN paper. However, the underlying architecture is based on the same paper. Now, this is not an apples-to-apples comparison because OpenCV’s GOTURN model is not exactly the same as the one published by the author. For this, we chose a Deep Learning based object tracker called GOTURN. The third application we tested was Object Tracking. When compiled with OpenMP, Darknet was more than twice as fast with 12.730 seconds per frame.īut OpenCV accomplished the same feat at an astounding 0.714 seconds per frame. Yes, that is not milliseconds, but seconds. If you are not familiar how to do this, please check out our post on Object detection using YOLOv3 and OpenCVĭarknet, when compiled without OpenMP, took 27.832 seconds per frame. The comparison was made by first importing the standard YOLOv3 object detector to OpenCV. The second application we chose was Object detection using YOLOv3 on Darknet. Keras came in third at 500 ms, but Caffe was surprisingly slow at 2200 ms. PyTorch at 284 ms was slightly better than OpenCV (320ms). The results are shown in the Figure below. We used the pre-trained model for VGG-16 in all cases. The first application we compared is Image Classification on Caffe 1.0.0, Keras 2.2.4 with Tensorflow 1.12.0, PyTorch 1.0.0 with torchvision 0.2.1 and OpenCV 3.4.3. Thanks to Vishwesh Ravi Srimali and Vikas Gupta who performed the experiments listed in this post.
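
To put the timings quoted in this post side by side, here is a minimal Python sketch that turns them into speedup ratios relative to OpenCV. The numbers are copied from the post. The dictionary layout and the helper name `speedup_over` are illustrative assumptions, not part of the code used for the experiments. GOTURN is left out because the post gives only the OpenCV time for it.

```python
# CPU timings quoted in this post, converted to seconds.
# The structure below is an illustrative assumption; only the numbers come from the post.
timings = {
    "VGG-16 classification": {
        "OpenCV": 0.320,
        "PyTorch": 0.284,
        "Keras": 0.500,
        "Caffe": 2.200,
    },
    "YOLOv3 detection": {
        "OpenCV": 0.714,
        "Darknet (OpenMP)": 12.730,
        "Darknet (no OpenMP)": 27.832,
    },
    "OpenPose pose estimation": {
        "OpenCV": 3.598,
        "Caffe reference": 25.45,
    },
}


def speedup_over(task_timings, baseline="OpenCV"):
    """Return other_time / baseline_time for every non-baseline entry.

    A value above 1 means the baseline is faster; below 1 means it is slower.
    """
    base = task_timings[baseline]
    return {
        name: seconds / base
        for name, seconds in task_timings.items()
        if name != baseline
    }


if __name__ == "__main__":
    for task, results in timings.items():
        for name, ratio in speedup_over(results).items():
            print(f"{task}: OpenCV is {ratio:.2f}x as fast as {name}")
```

A ratio below 1, as in the PyTorch row, means OpenCV was the slower of the two on that task.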
